The topics in this note outline the ongoing discussion among companies on how to establish evaluation frameworks, KPIs, and lifecycle management (LCM) principles for AI/ML use cases in 6G. Building on the foundations of 5G NR and 5G-Advanced, participants emphasized the need to broaden the evaluation criteria beyond traditional performance and intermediate metrics to include power consumption, inference latency, training complexity, inter-vendor interoperability, and system overhead. While there was consensus that 5G’s AI/ML frameworks could serve as a starting point, companies debated how much change should be introduced: whether to minimize revisions or embrace a unified, future-proof framework. Proposals also highlighted the importance of ensuring energy efficiency, scalability, and robust data collection mechanisms, while accounting for real-world deployment scenarios and the trade-offs between performance gains and implementation complexity. These summaries capture the early stage of 6GR standardization, where study items are being defined and guiding principles for evaluating AI/ML in 6G are being shaped.

Evaluation and KPIs

Evaluation in AI/ML-based 5G and 6G design starts from a simple rule: model-level and system-level aspects must be measured together. A model may show promising accuracy in isolation, but its real value appears only when it improves network-level outcomes such as throughput, latency, and overhead. Therefore, AI evaluation must include metrics for computational complexity, power consumption, inference delay, and robustness across vendors and configurations. The purpose is to verify not only that the model performs well, but also that it operates efficiently and reliably in practical network conditions.

A clear baseline is essential for fair comparison. Every AI feature should be tested using the same channel models, antenna setups, and NR reference conditions to ensure results are comparable. KPIs should track complexity in operations per bit, memory usage, and energy per bit. They should also measure how well the model generalizes across frequency bands, deployment types, and mobility levels. Equally important is stability: systems must continue to function gracefully even when the AI is disabled or exposed to unfamiliar data.

The evaluation approach should remain flexible but structured. KPI definitions can vary by use case, covering link-level measures such as BLER, EVM, or spectral efficiency when relevant. Inference latency and power efficiency deserve particular focus, since model execution time and energy consumption often determine real-world feasibility. Quantitative limits on inference cost, such as upper bounds in FLOPS or power budgets per operation, help maintain a practical balance between performance and efficiency.

In summary, AI/ML evaluation for future networks must follow three guiding ideas: realism, consistency, and transparency. Realism ensures testing reflects deployment conditions. Consistency guarantees fair comparison across systems. Transparency exposes both performance gains and associated trade-offs. This balanced framework allows AI techniques to evolve as an integral part of the cellular standard, complementing conventional mechanisms rather than replacing them.
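As a rough illustration of the per-bit complexity and energy KPIs discussed above, the sketch below normalizes the cost of one inference by the number of bits it serves and checks the result against an upper bound. The class name, field names, budget values, and example numbers are all hypothetical placeholders chosen for illustration; they are not taken from any 3GPP contribution.

```python
from dataclasses import dataclass


@dataclass
class InferenceProfile:
    """Measured cost of one model inference (illustrative values only)."""
    flops: float            # floating-point operations per inference
    energy_joules: float    # energy consumed per inference
    latency_s: float        # wall-clock inference time in seconds
    bits_delivered: float   # information bits served by this inference


def per_bit_kpis(p: InferenceProfile) -> dict:
    """Normalize cost by delivered bits so different models are comparable."""
    return {
        "ops_per_bit": p.flops / p.bits_delivered,
        "energy_per_bit_j": p.energy_joules / p.bits_delivered,
        "latency_s": p.latency_s,
    }


def within_budget(kpis: dict, budget: dict) -> bool:
    """True only if every budgeted KPI is at or below its assumed upper bound."""
    return all(kpis[name] <= limit for name, limit in budget.items())


# Hypothetical measurement and hypothetical budget.
profile = InferenceProfile(flops=2.0e6, energy_joules=1.0e-4,
                           latency_s=2.0e-4, bits_delivered=8000)
kpis = per_bit_kpis(profile)
budget = {"ops_per_bit": 500.0, "energy_per_bit_j": 5.0e-8, "latency_s": 5.0e-4}
print(kpis, within_budget(kpis, budget))
```

Reporting cost per delivered bit, rather than per inference, is one way to keep a small model serving few bits from looking cheaper than a larger model serving many.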

Hybrid ML/non-ML designs

The integration of AI and ML into the physical layer requires a balance between innovation and interoperability. The goal is to enhance selected processing stages with learned models without breaking the deterministic and testable nature of classical signal chains. This approach treats machine learning as an assistive layer, not a replacement.

Partitioning must be done carefully so that learned blocks add value where statistical adaptation helps, while keeping core signal processing intact. Each insertion must preserve existing interfaces such as I/Q samples, LLRs, and CSI, allowing legacy and ML-enabled receivers to coexist. At the transmitter, ML aids can support shaping or precoding choices but must remain compliant with emission masks, linearity, and other RF constraints. Runtime confidence checks and fallback paths are essential to ensure stability and reliability.

Lifecycle management of models is another key aspect. Training, validation, and adaptation must remain observable and reversible. Each model version needs traceability, calibration records, and rollback triggers.

Finally, complexity and power limits define where ML is practical. Lightweight designs should fit within device budgets and deactivate when the gain-to-cost ratio is poor. Verification and interoperability procedures must be clearly defined so mixed ML/non-ML systems can operate together.

In summary, the purpose is to insert learning where it helps, contain it where it risks instability, and ensure that all gains are measurable, reversible, and compatible with standard 5G/6G PHY frameworks.
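The runtime confidence check and fallback path mentioned above can be sketched as a thin wrapper around a learned block. This is a minimal illustration under stated assumptions: the function `hybrid_estimate`, the confidence threshold, and the toy estimators are hypothetical, and a real receiver would derive confidence from its own monitoring metric.

```python
from typing import Callable, List, Sequence, Tuple


def hybrid_estimate(
    rx: Sequence[float],
    classical: Callable[[Sequence[float]], List[float]],
    learned: Callable[[Sequence[float]], Tuple[List[float], float]],
    min_confidence: float = 0.8,   # assumed threshold, for illustration
    ml_enabled: bool = True,
) -> Tuple[List[float], str]:
    """Return (estimate, path), where path is 'ml' or 'classical'."""
    if ml_enabled:
        estimate, confidence = learned(rx)
        if confidence >= min_confidence:
            return estimate, "ml"
    # Fallback: ML is disabled or did not meet the confidence threshold.
    return classical(rx), "classical"


# Toy stand-ins for the two processing paths (not real estimators).
def classical_toy(rx):
    return [0.5 * x for x in rx]


def confident_model(rx):
    return [0.6 * x for x in rx], 0.95


def unsure_model(rx):
    return [9.0 * x for x in rx], 0.30


rx = [1.0, 2.0]
print(hybrid_estimate(rx, classical_toy, confident_model)[1])
print(hybrid_estimate(rx, classical_toy, unsure_model)[1])
print(hybrid_estimate(rx, classical_toy, confident_model, ml_enabled=False)[1])
```

Because both paths return the same type through the same interface, the downstream stages do not need to know which path produced the estimate.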

Selection of AI/ML Use Cases

The selection of AI/ML use cases in 5G and 6G standardization follows a disciplined and pragmatic approach. Each company emphasizes measurable performance gains while maintaining compatibility with existing architectures and ensuring implementation feasibility. The focus is not only on improving throughput or accuracy but also on balancing complexity, interoperability, and deployment practicality.

Vendors and operators use different but converging criteria. Most require that AI-based solutions outperform classical methods under fair conditions while keeping fallback paths available. Complexity, signaling impact, and energy efficiency are now treated as critical evaluation dimensions, not afterthoughts.

Another emerging trend is the need for explainability and runtime assurance. Proposals must demonstrate stability across datasets and scenarios, with performance metrics tied to real system KPIs such as CQI accuracy, beam index reliability, and HARQ success rates.

Ultimately, the goal is to identify AI/ML use cases that can bring clear, standardized value, whether as 5G-Advanced extensions or as enablers of new 6G features, while keeping devices affordable, networks interoperable, and operations sustainable.

Lifecycle Management (LCM) Framework

Lifecycle Management of AI/ML in 6G builds on the foundation established in 5G NR but extends it toward a more adaptive, transparent, and intelligent framework. The 5G design mainly supported one-directional model handling, where training occurred offline and deployment followed fixed control flows. For 6G, this approach becomes too restrictive. As AI moves deeper into the radio interface and network intelligence layers, models must be continuously updated, distributed, and validated in real time.

This transition raises the key design question: whether to preserve backward compatibility with minimal changes or to enable a complete redesign that supports a wider range of learning and deployment scenarios. The new framework must integrate data and model management, dynamic adaptation to network and UE conditions, and advanced training paradigms such as online learning, federated learning, and meta-learning. It also needs to address hardware and energy efficiency, as AI workloads begin to coexist with conventional signal processing at the base station and UE. Managing inference latency, processing cost, and memory utilization becomes as important as achieving learning accuracy. The LCM design should therefore establish a clear link between algorithmic intelligence and system-level performance, ensuring that learning-driven features remain predictable and explainable in operation.

A well-defined lifecycle is essential. Every model must follow a consistent process: training, activation, monitoring, and rollback. Each stage should include operator visibility and control, allowing explainability and accountability within the network. Rollback mechanisms must guarantee deterministic fallback to non-AI baselines whenever models deviate from expected behavior. Standardized signaling, such as model identifiers, validity scopes, and rollback triggers, should be integrated through PHY/MAC hooks. Continuous monitoring of KPIs like BLER, latency, and power efficiency enables automatic fallback or retraining decisions when thresholds are violated.

Ultimately, the 6G LCM framework aims to create a unified environment for AI/ML management that is scalable, secure, and interoperable across vendors and layers. It should enable multi-node learning, site-specific adaptation, and shared model state while preserving trust through transparent auditing. Rather than treating AI as an external add-on, 6G positions lifecycle management as the core mechanism that allows learning systems to evolve safely, efficiently, and collaboratively within the cellular standard.
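The lifecycle described above (activation, monitoring, and rollback driven by KPI thresholds) can be pictured as a small state machine. The sketch below is only an illustration: the class `ManagedModel`, its field names, the example scope, and the threshold values are assumptions made for this example and do not correspond to any specified signaling.

```python
from dataclasses import dataclass, field
from enum import Enum


class State(Enum):
    TRAINED = "trained"
    ACTIVE = "active"
    ROLLED_BACK = "rolled_back"   # network runs the non-AI baseline


@dataclass
class ManagedModel:
    model_id: str                 # hypothetical model identifier
    validity_scope: dict          # conditions the model was validated for
    rollback_triggers: dict       # KPI name -> worst acceptable value (upper bound)
    state: State = State.TRAINED
    audit_log: list = field(default_factory=list)

    def activate(self) -> None:
        self.state = State.ACTIVE
        self.audit_log.append(("activate", self.model_id))

    def monitor(self, kpis: dict) -> State:
        """Roll back if any monitored KPI exceeds its limit.

        A KPI missing from the report is treated as not violated.
        """
        if self.state is not State.ACTIVE:
            return self.state
        violated = [name for name, limit in self.rollback_triggers.items()
                    if kpis.get(name, 0.0) > limit]
        if violated:
            self.state = State.ROLLED_BACK
            self.audit_log.append(("rollback", violated))
        return self.state


model = ManagedModel(
    model_id="csi-enc-v1",
    validity_scope={"band": "FR1", "max_speed_kmh": 120},
    rollback_triggers={"bler": 0.10, "latency_ms": 1.0},
)
model.activate()
print(model.monitor({"bler": 0.05, "latency_ms": 0.5}))
print(model.monitor({"bler": 0.20, "latency_ms": 0.5}))
print(model.audit_log)
```

Keeping the audit log next to the state transitions is one simple way to provide the operator visibility and traceability the text calls for.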

Data Collection Framework

Data collection plays a central role in enabling AI/ML-driven intelligence within the 6G system. While the 5G NR framework already supports certain measurement and reporting mechanisms, it was not originally designed with large-scale learning feedback loops in mind. For 6G, the objective is to create a unified, future-proof data collection framework that can serve multiple AI/ML functions across layers and working groups. This involves balancing two goals: ensuring broad applicability across use cases while maintaining flexibility for domain-specific customization.

The discussion focuses on whether the framework should remain an extension of existing NR measurement and reporting procedures or evolve into a more general-purpose, learning-oriented data management plane. Such a plane would handle the collection, pre-processing, and exchange of AI-relevant data (e.g., channel state, interference, mobility patterns, resource usage, and hardware-level statistics) in a consistent and interoperable manner. Defining a clear boundary between use case–specific collection (for example, CSI feedback for channel prediction) and general-purpose collection (e.g., cross-layer learning datasets) is essential to avoid overlap and ensure clean coordination among RAN1, RAN2, and SA groups.

From a design perspective, the framework must ensure scalability, privacy, and efficiency. Collected data should be structured with standardized content and formats, supporting both real-time and historical analysis. It must also integrate seamlessly with the AI/ML lifecycle management framework, allowing models to be trained, validated, and updated based on continuously gathered field data. This requires alignment across working groups so that the mechanisms for collection, storage, and signaling remain coherent throughout the overall 6G architecture.

In summary, the 6G data collection framework aims to move beyond traditional measurement reporting. It should evolve into a unified infrastructure that feeds learning systems while maintaining transparency, interoperability, and system stability. RAN1’s task is to define the scope, structure, and signaling mechanisms of this framework, ensuring that it supports diverse AI/ML use cases while remaining compatible with existing NR principles and forward-looking 6G designs.

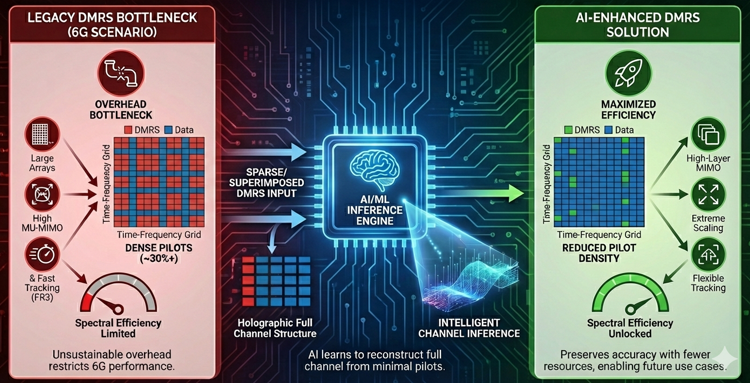

Use Cases

AI/ML integration in 6G is expected to extend across the physical layer, control plane, and system operation, transforming how wireless networks sense, predict, and adapt to their environment. Each use case represents a concrete point where intelligence can replace static configuration or rigid signaling with dynamic, context-aware behavior. The objective is not to replace existing NR mechanisms but to enhance them through learning-based prediction, compression, and optimization, always within well-defined control and lifecycle boundaries.

The use cases studied in this phase collectively define the design space for AI-enabled RAN functions. They span channel acquisition, beam management, coding, sensing, and energy optimization, each requiring new signaling interfaces, trust indicators, and lifecycle management (LCM) hooks for activation, monitoring, and rollback. At the PHY and MAC layers, AI primarily aims to reduce overhead, improve robustness, and minimize UE complexity, while at the system level, it enhances energy efficiency, mobility handling, and joint communication–sensing functionality.

Key directions include smarter channel representation and feedback (AI-assisted CSI acquisition), receiver-side intelligence to reduce pilot overhead, predictive beam and mobility management for faster adaptation, and energy-aware scheduling for sustainable operation. AI-based source–channel joint coding and integrated sensing further blur traditional boundaries between communication and perception. Across all these areas, explainability and fallback remain essential: each AI-assisted function must expose its validity, confidence, and rollback conditions to ensure stable operation and cross-vendor interoperability.

In essence, these 6G AI/ML use cases illustrate the gradual shift from static configuration to intelligent adaptation. They define where and how learning can be embedded in the radio system to improve performance while maintaining transparency, control, and compatibility with established NR principles.

The AI/ML use cases described in this section are derived from ongoing discussions, technical contributions, and study items presented in 3GPP RAN1. These use cases represent potential areas of exploration and are intended solely to illustrate the range of AI/ML concepts currently being evaluated for future 6G systems. Their inclusion here does not imply endorsement, approval, or prioritization by 3GPP, nor does it mean that these techniques will be adopted, standardized, or realized in any final 6G specification. The ultimate set of features and mechanisms standardized for 6G will depend on future study results, consensus within the working groups, cross-layer alignment, feasibility assessments, and system-level evaluations conducted throughout the standardization process. Therefore, the content in this section should be interpreted as a summary of candidate technical directions and potential use cases under study, rather than a definitive roadmap of features that 6G will ultimately support. Readers should view these descriptions as exploratory and subject to change as the 3GPP standardization process progresses.

DMRS Improvement

In 6G, DMRS becomes a critical bottleneck because the system must support far larger antenna arrays, higher MU-MIMO orders, and more frequent channel tracking than in 5G. The conventional DMRS structure was originally designed to be fully orthogonal to data and to scale only up to a certain number of ports, so the overhead in 5G NR already reaches almost thirty percent in some configurations. When this structure is extended to the massive port counts envisioned for 6G, the overhead grows to an unsustainable level and directly limits spectral efficiency. At the same time, operation in upper-mid bands and FR3 requires more frequent and more accurate channel estimation due to faster channel variation, mobility, and positioning requirements, which further increases the demand for reference signals. In this situation, simply adding more DMRS symbols or ports is no longer a practical solution, so 6G needs a way to preserve channel-estimation accuracy while using far fewer pilot resources.

This is where AI/ML fits naturally. With an AI-based receiver, the UE can infer the full time-frequency channel structure from sparse DMRS patterns, or even from DMRS partially superimposed onto data, without relying on dense orthogonal pilots. This allows 6G to reduce DMRS density in the time domain, the frequency domain, or the spatial domain, while still maintaining performance at a level comparable to or better than legacy interpolation-based methods. Therefore, AI/ML for DMRS is not a decorative feature but a necessary evolution: it enables high-layer MIMO operation, preserves spectral efficiency under extreme antenna scaling, and provides the flexibility required for new 6G use cases that demand fast, precise, and resource-efficient channel tracking.
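To make the overhead figure concrete, the short calculation below counts the share of a slot consumed by DMRS. It assumes a 14-symbol slot in which each DMRS symbol is entirely unavailable for data; the source does not state which configuration the "almost thirty percent" refers to, so the four-symbol case shown here is one plausible reading rather than a confirmed one. The function name and the `re_fraction` parameter are illustrative.

```python
def dmrs_overhead(dmrs_symbols: int, symbols_per_slot: int = 14,
                  re_fraction: float = 1.0) -> float:
    """Fraction of slot resources spent on DMRS.

    re_fraction is the share of subcarriers in each DMRS symbol that cannot
    carry data (1.0 means the whole symbol is lost to data).
    """
    return dmrs_symbols * re_fraction / symbols_per_slot


for n in (1, 2, 4):
    print(f"{n} DMRS symbol(s): {dmrs_overhead(n):.1%} of the slot")
```

Under these assumptions, four DMRS symbols in a 14-symbol slot cost 4/14 of the resources, and halving the pilot density halves that cost, which is the kind of saving the sparse-DMRS proposals target.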

Motivation

The main reason AI/ML becomes necessary for DMRS improvement in 6G is that traditional receivers can no longer extract accurate channel information when DMRS density is aggressively reduced. In 5G, the channel estimation process depends heavily on orthogonal pilots and structured interpolation across time and frequency. This approach only works well when enough pilots are available and the channel changes slowly enough for linear or MMSE-based interpolation to remain valid. In 6G, none of these assumptions hold. The number of DMRS ports increases dramatically with massive MIMO, the channel coherence shrinks in upper-mid and FR3 bands, and multi-layer scheduling creates irregular pilot patterns that break the neat structure assumed by legacy estimation algorithms. If DMRS overhead is reduced using conventional methods, the channel estimation error increases sharply and leads to large BLER and throughput degradation.

AI/ML provides a fundamentally different capability: it can learn the underlying structure of the propagation environment and the statistical relationship between sparse pilots and the full channel matrix. This allows the receiver to infer dense channel information from sparse DMRS, or even from superimposed DMRS embedded inside data, something impossible for classical LS/MMSE-based receivers. As a result, AI/ML becomes the only practical way to maintain channel-estimation accuracy while reducing DMRS density in the time, frequency, and spatial domains. It enables high-port, high-layer 6G operation without letting pilot overhead dominate the resource grid, and therefore is an essential component of achieving 6G-level spectral efficiency under massive MIMO and fast-varying channels.

Methodology

In 6G, the major methodologies for improving DMRS with AI/ML fall into a few clear categories.

The first is AI-based channel estimation using sparse DMRS. Here, only a fraction of the DMRS resources are transmitted in time or frequency, but a neural model (typically a CNN, DnCNN, MLP-Mixer, or Transformer) reconstructs the full channel matrix from these sparse samples. This allows the system to cut DMRS overhead to one-half or even one-third while keeping the channel-estimation quality close to or better than conventional MMSE-based processing.

The second is AI-based joint channel estimation and equalization, where the receiver does not follow the NR pipeline of “DMRS → CE (Channel Estimation) → EQ (Equalization) → LLR”. Instead, a neural network takes the received DMRS and data together and directly outputs either equalized symbols or soft bits. This approach handles non-linearities, interference, and sparse pilots much better than the traditional cascaded algorithms.

The third is superimposed DMRS (SIP) with AI separation, where DMRS is embedded inside the data REs instead of using dedicated pilot REs. A CNN or similar model then learns to disentangle pilots and data jointly, enabling an entirely different overhead-free reference-signal structure that only becomes practical with ML-based receivers.

The fourth is AI-assisted DMRS pattern design, where models help evaluate or optimize time-domain, frequency-domain, and spatial-domain DMRS distribution, especially when the number of ports grows to 64 or beyond. This allows flexible pilot structures that are not feasible under the strict orthogonality constraints of the NR design.

The fifth is model-based and hybrid AI receivers, where partial domain knowledge (e.g., LS channel estimates, noise variance, or DMRS structure) is combined with neural refinement to improve robustness. This includes model-driven networks that start from an LS/MMSE baseline and use AI layers to refine the estimates.

Finally, there is transformer-based channel prediction and interpolation, which learns temporal and spatial correlation structures and generalizes better to mobility and FR3 environments.

All these methodologies share the same intention: reduce the dependence on dense, orthogonal DMRS while achieving channel-estimation quality that satisfies 6G’s massive MIMO and high-mobility requirements.
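For reference, the classical baseline that the sparse-DMRS and hybrid approaches start from can be sketched in a few lines: a least-squares (LS) estimate at the pilot subcarriers followed by linear interpolation across frequency. In a learned receiver, a neural model would replace or refine the interpolation step. The example is a noise-free, single-symbol toy; the pilot spacing, the pilot value, and the synthetic channel are assumptions chosen only so the result is easy to check.

```python
def ls_estimate(rx_pilots, tx_pilots):
    """Least-squares channel estimate at pilot positions: H = Y / X."""
    return [y / x for y, x in zip(rx_pilots, tx_pilots)]


def interpolate(pilot_idx, h_pilots, n_subcarriers):
    """Linearly interpolate the channel between pilot subcarriers.

    Subcarriers outside the pilot span are held at the nearest pilot value.
    pilot_idx must be sorted in increasing order.
    """
    h = []
    for k in range(n_subcarriers):
        if k <= pilot_idx[0]:
            h.append(h_pilots[0])
        elif k >= pilot_idx[-1]:
            h.append(h_pilots[-1])
        else:
            # Index of the last pilot at or below subcarrier k.
            j = max(i for i, p in enumerate(pilot_idx) if p <= k)
            k0, k1 = pilot_idx[j], pilot_idx[j + 1]
            w = (k - k0) / (k1 - k0)
            h.append((1 - w) * h_pilots[j] + w * h_pilots[j + 1])
    return h


# Synthetic channel that varies linearly across 9 subcarriers (toy assumption).
n = 9
h_true = [complex(1 + 0.1 * k, 0.05 * k) for k in range(n)]
pilot_idx = [0, 4, 8]                      # sparse pilots: every 4th subcarrier
tx = [1j] * len(pilot_idx)                 # known pilot symbols
rx = [h_true[k] * x for k, x in zip(pilot_idx, tx)]   # noise-free reception

h_hat = interpolate(pilot_idx, ls_estimate(rx, tx), n)
max_err = max(abs(a - b) for a, b in zip(h_hat, h_true))
print(f"max interpolation error: {max_err:.2e}")
```

Linear interpolation is exact here only because the toy channel is itself linear in frequency. With a frequency-selective channel and noise, the error grows as pilots get sparser, which is the gap the learned estimators are meant to close.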

Models

For DMRS-related use cases in 6G, several neural network models are being explored because each model captures a different structure of the wireless channel when only sparse or superimposed DMRS is available. CNN-based models form the core approach since they naturally handle the two-dimensional time-frequency structure of the received grid and can refine LS/MMSE estimates into higher-quality channel maps even when the DMRS pattern is very sparse. Residual networks such as ResNet build on this idea and stabilize deeper architectures, allowing the receiver to learn more complex relationships between scattered DMRS and the full channel response. Transformer models are also highlighted because their attention mechanism captures long-range dependencies across time and frequency, so they are effective in reconstructing channels for mobility scenarios or for DMRS patterns that break the regular NR structure.

Recurrent models such as Bi-LSTM focus on temporal evolution and are useful when the channel changes quickly across OFDM symbols, especially in high-mobility or high-frequency cases where DMRS cannot be dense enough. MLP-Mixer models are applied mainly in superimposed-DMRS receivers because they process channel features by mixing information across time and frequency without relying on convolution, which helps separate overlapping pilot and data components. Fully connected networks also appear in lighter modules inside the receiver pipeline where local decisions or refinements are needed.

Overall, these model families form the toolbox used in 6G to recover accurate channel information from reduced DMRS overhead, superimposed pilots, or flexible pilot structures that traditional receivers cannot handle.

Challenges

When applying AI/ML to DMRS-related functions in 6G, several challenges naturally arise due to the gap between theoretical AI models and practical radio implementations. The first difficulty comes from the highly variable nature of wireless channels, since models trained on specific environments may not generalize well when the propagation condition, mobility pattern, or interference structure changes. This means an AI receiver that performs well in one scenario may degrade sharply in another unless continuous retraining or domain adaptation is supported.

Another challenge is the tight processing budget on the UE side. DMRS-based channel estimation must run within strict latency and power constraints, but many of the proposed neural models, especially Transformers and deep CNNs, can require substantial computational resources and memory that may exceed what a handheld device can support. Data availability is another issue: high-quality labeled datasets for sparse DMRS or superimposed-DMRS scenarios are difficult to obtain, and synthetic data may not fully capture real-world impairments such as phase noise, IQ imbalance, hardware nonlinearity, or multi-cell interference.

Stability and reliability also become critical, because even a small inference error or unexpected output can propagate into equalization, demodulation, and HARQ, causing sudden BLER spikes that operators cannot tolerate. Finally, integrating AI-based DMRS schemes with existing air-interface procedures, such as HARQ timing, RS coexistence, mobility, and codebook operation, requires careful specification work to ensure backward compatibility and predictable behavior.

Together, these points illustrate that while AI/ML enables aggressive DMRS reduction and new pilot structures, it also introduces new layers of complexity that must be addressed before 6G systems can safely depend on AI receivers in commercial deployments.

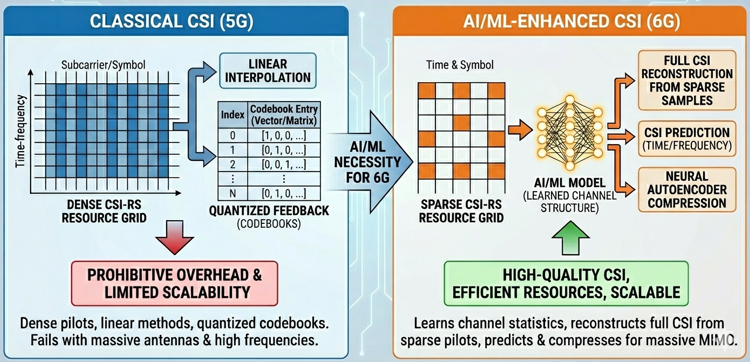

CSI Enhancement

In 6G, CSI enhancement becomes a central use case because the radio interface must support extremely large antenna arrays, wide bandwidths, and diverse propagation environments that make CSI acquisition both more expensive and more critical than in 5G. As systems move into upper-mid bands and FR3, the spatial and temporal channel structure becomes more irregular and highly frequency selective, requiring richer and more accurate CSI to maintain beamforming gain and enable high-order MU-MIMO. However, generating such CSI with the existing NR approach would require dense CSI-RS configurations and large uplink CSI feedback, which leads to excessive overhead on both downlink and uplink. This tension between increasing CSI accuracy requirements and the need to maintain spectral efficiency forces 6G to revisit how CSI is obtained, represented, and fed back. AI/ML provides a new mechanism to discover structure in sparsely sampled CSI and to compress or reconstruct CSI far more efficiently than linear or codebook-based methods. As a result, CSI enhancement through AI/ML becomes a foundational capability for enabling scalable massive MIMO, robust mobility, and energy-efficient operation in 6G.

Motivation

The main reason AI/ML becomes necessary for CSI enhancement is that classical CSI acquisition and feedback mechanisms do not scale with 6G requirements. In 5G, CSI relies on relatively dense CSI-RS patterns, linear interpolation, and quantized feedback via predefined codebooks. These mechanisms assume moderate antenna counts, stable channel statistics, and limited feedback budgets. In 6G, these assumptions no longer hold. The number of antennas and layers increases dramatically, the channel coherence shrinks at higher frequencies, and multi-TRP operation creates spatial inconsistencies that make the existing CSI structures inefficient. If CSI-RS density or feedback precision is increased using traditional methods, overhead quickly becomes prohibitive in both DL and UL directions.

AI/ML provides an alternative by learning the statistical structure of the channel, enabling reconstruction of full CSI matrices from sparse RS samples, predicting CSI across time or frequency, and compressing CSI using neural autoencoders instead of fixed codebooks. This allows 6G to obtain richer CSI while using far fewer radio resources. As a result, AI/ML becomes the only practical way to deliver high-quality CSI for 6G massive MIMO without overwhelming the resource grid or feedback channel.

Methodology

In 6G, the major methodologies for CSI enhancement with AI/ML can be grouped into a few key categories. The first category is AI-based CSI reconstruction from sparse RS, where only a subset of CSI-RS or other reference signals is transmitted, and a neural model reconstructs the full CSI tensor over time, frequency, and space. This directly reduces downlink RS overhead while keeping CSI quality close to or better than conventional interpolation. The second category is AI-based CSI compression and feedback, where encoder–decoder architectures or JSCC/JSCCM schemes map high-dimensional CSI into compact latent representations that can be fed back with fewer bits than legacy codebooks. A third category is temporal and frequency-domain CSI prediction, where models exploit channel correlation to predict future or unmeasured CSI, reducing the need for frequent RS transmission or large-band measurements. Cross-band and cross-link CSI inference form a fourth category, in which CSI observed on one band, panel, or link is used to infer CSI on another, enabling more efficient multi-band and multi-TRP operation. Finally, there are AI-assisted CSI codebook and report design schemes, where models help tailor feedback structures, quantization strategies, or reporting modes to specific deployment conditions.

All these methodologies target the same goal: delivering sufficiently accurate CSI for 6G massive MIMO while minimizing RS and feedback overhead.
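In the compression-and-feedback category, the latent vector produced by the UE-side encoder still has to be turned into a finite number of feedback bits. The sketch below shows only that step, using a plain uniform scalar quantizer; the trained encoder and decoder are out of scope here, and the latent values, value range, and bit width are hypothetical. Actual proposals may use other quantizers, for example vector quantization.

```python
def quantize(latent, bits, lo=-1.0, hi=1.0):
    """Uniform scalar quantizer: clip each value to [lo, hi], map to an index."""
    levels = (1 << bits) - 1
    indices = []
    for v in latent:
        v = min(max(v, lo), hi)
        indices.append(round((v - lo) / (hi - lo) * levels))
    return indices


def dequantize(indices, bits, lo=-1.0, hi=1.0):
    """Map indices back to reconstruction values on the same uniform grid."""
    levels = (1 << bits) - 1
    return [lo + i / levels * (hi - lo) for i in indices]


def payload_bits(latent_dim, bits):
    """Feedback payload for one report: one index per latent element."""
    return latent_dim * bits


latent = [0.12, -0.5, 0.9, -1.3]     # hypothetical encoder output
bits = 4
idx = quantize(latent, bits)
recon = dequantize(idx, bits)
print(idx, payload_bits(len(latent), bits))
print([round(r, 3) for r in recon])
```

The payload scales with latent dimension times bits per element, so the encoder's latent size and the quantizer resolution jointly set the feedback cost. Values outside the assumed range are clipped, which is one reason the range has to be agreed between encoder and decoder.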

Models

For CSI enhancement in 6G, several neural network families are considered because each captures a different aspect of the CSI structure across time, frequency, and space. Transformer-based models are particularly attractive for CSI reconstruction and prediction, since attention mechanisms can capture long-range dependencies and irregular sampling patterns in the time–frequency grid. CNNs and ResNet-style architectures are used to exploit local correlations and spatial patterns, making them suitable for 2D or 3D CSI tensors derived from RS measurements. Autoencoder architectures, including convolutional and Transformer-based variants, play a central role in CSI compression and JSCC/JSCCM, where encoder and decoder jointly learn compact latent spaces that preserve the most useful channel features. Recurrent models such as LSTM or Bi-LSTM are applied to sequences of CSI snapshots to track temporal evolution, especially in mobility scenarios. Lighter MLP or MLP-Mixer models can be used in scenarios where complexity and latency must be tightly constrained, or as refinement modules on top of more structured estimators. Together, these model families provide a flexible toolbox to meet different complexity, latency, and performance targets in CSI enhancement.

Challenges

Applying AI/ML to CSI enhancement also introduces a number of challenges that must be resolved before large-scale deployment. Generalization is a primary concern: models trained on specific channel models or deployment scenarios may not perform well when propagation, interference, or mobility patterns differ significantly, requiring mechanisms for continual learning or periodic retraining. Complexity and latency are also critical, especially on the UE side, where CSI reconstruction, compression, or decoding must be completed within tight timing and power budgets. Training data quality and realism pose another challenge, as simulator-generated datasets may not capture all hardware impairments or multi-cell interference present in the field. Robustness and reliability are vital, since erroneous CSI reconstruction or compression can degrade beamforming, scheduling, and link adaptation, leading to throughput loss or instability. Finally, integrating AI-based CSI procedures into existing specification frameworks involves careful design of LCM, capability signaling, fallback behaviors, and coexistence with legacy codebook-based CSI reporting.

These challenges highlight that while AI/ML makes richer and more efficient CSI handling possible, substantial engineering and standardization work is needed to ensure safe and predictable operation in 6G networks.

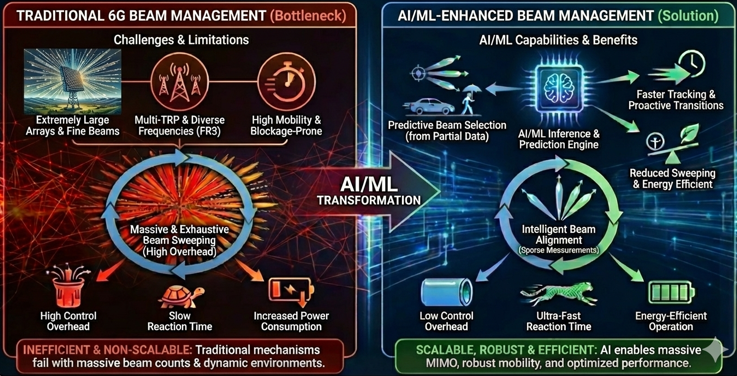

Beam Management Enhancement

In 6G, beam management becomes one of the most demanding procedures because the system must support extremely large antenna arrays, fine-grained beam directions, and multi-TRP operation across diverse frequency ranges including FR3. Compared to 5G, where beams are moderately wide and updated periodically, 6G beams are narrower, more numerous, and must be updated far more frequently to maintain link reliability in high-mobility or blockage-prone environments. Traditional NR beam management relies heavily on periodic beam sweeping, CSI-RS/SSB–based measurements, and fixed beam refinement procedures. These mechanisms become inefficient as the number of beams per TRP grows and the number of TRPs per UE increases. This results in large control overhead, increased power consumption, and slow reaction time. AI/ML enables 6G to infer optimal beams from partial measurements, predict beam transitions before they occur, and reduce the need for exhaustive sweeping. As a result, AI-based beam management becomes a fundamental enabler for scalable massive MIMO, robust mobility, and energy-efficient operation.

Motivation

Beam management in 6G requires faster tracking, higher beam resolution, and multi-TRP coordination, all of which significantly increase overhead if classical NR mechanisms are extended directly. SSB sweeping becomes too costly with large beam counts, CSI-RS–based refinement requires too many resources, and feedback of beam quality becomes inefficient as directional granularity increases. Blockage, mobility, and dynamic environments further exacerbate these limitations. AI/ML offers the ability to predict beam direction, infer beam quality from sparse measurements, and select beams without exhaustively testing all candidates. This allows 6G to maintain beam alignment while dramatically reducing sweeping and reporting overhead.

Methodology

6G beam management enhancement with AI/ML follows several major approaches. The first is beam prediction, where models learn temporal beam evolution and identify the next expected best beam, reducing reliance on reactive sweeping. The second is beam quality inference using sparse CSI-RS or partial RF observations, allowing the UE or gNB to estimate beam performance without measuring every direction. A third methodology is cross-frequency and cross-TRP beam inference, where beams learned at one band or TRP help infer beams at another, enabling more efficient multi-band operation. Another key method is AI-assisted beam sweeping reduction, where models rank beams before sweeping so that only a small subset must be tested. Beam refinement and fine-tracking also benefit from AI-enhanced interpolation of angular domain information. Together, these methodologies enable scalable and responsive beam management in complex 6G deployments.
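The sweeping-reduction idea can be illustrated with a minimal sketch. Everything here is hypothetical: the 64-beam codebook, the RSRP profile, and the "model scores" (true RSRP plus a bounded error standing in for a trained ranking model) are assumptions chosen only to show how ranking before sweeping shrinks the measured set.

```python
import random

def top_k_sweep(scores, measure_rsrp, k):
    """Measure only the k beams the model ranks highest; return (best_beam, rsrp)."""
    candidates = sorted(range(len(scores)), key=lambda b: scores[b], reverse=True)[:k]
    measured = {b: measure_rsrp(b) for b in candidates}
    best = max(measured, key=measured.get)
    return best, measured[best]

# Hypothetical setup: 64 beams, true RSRP (dBm) peaks at beam 37.
NUM_BEAMS = 64
TRUE_BEST = 37
true_rsrp = [-70.0 - 1.5 * abs(b - TRUE_BEST) for b in range(NUM_BEAMS)]

# Stand-in for a trained ranking model: true RSRP plus an error of at most 3 dB.
rng = random.Random(0)
model_scores = [r + rng.uniform(-3.0, 3.0) for r in true_rsrp]

K = 8
best_beam, best_rsrp = top_k_sweep(model_scores, lambda b: true_rsrp[b], K)
overhead_reduction = 1.0 - K / NUM_BEAMS  # fraction of beam measurements avoided
print(best_beam, best_rsrp, overhead_reduction)
```

With this error bound the true best beam always falls inside the top-8 set, so the sweep measures 8 beams instead of 64. A real ranking model offers no such guarantee, which is why fallback to a wider sweep remains necessary.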

Models

Several neural model families are explored for beam management in 6G. Sequence-based models such as LSTM, GRU, and Transformers are used for beam prediction by learning temporal evolution patterns. CNN-based models extract beam-domain or angular-domain features from sparse CSI-RS or RF samples. Autoencoder-type architectures compress and reconstruct beamspace information for ranking or inference tasks. Graph neural networks (GNNs) appear in multi-TRP and multi-panel inference tasks where spatial relationships matter. Lightweight MLP-based models serve as efficient ranking or scoring modules suitable for UE-side inference. Together these models cover prediction, inference, ranking, and refinement functions required for AI-enhanced beam management.

Challenges

Applying AI/ML to beam management poses several challenges. First, generalization is difficult because beam patterns depend heavily on deployment, TRP geometry, environment, and frequency band; models trained in one setting may perform poorly in another. Second, real-time beam prediction and inference must run under strict timing constraints, especially on the UE side. Third, high-quality datasets capturing mobility, blockage, and multi-TRP geometry are difficult to collect, and simulated datasets may lack realism. Fourth, erroneous beam prediction or ranking can lead to beam misalignment, triggering beam failures and throughput collapse. Finally, integrating AI-driven beam management with existing SSB/CSI-RS-based procedures requires clear LCM handling, fallback behavior, and coexistence with legacy NR mechanisms.

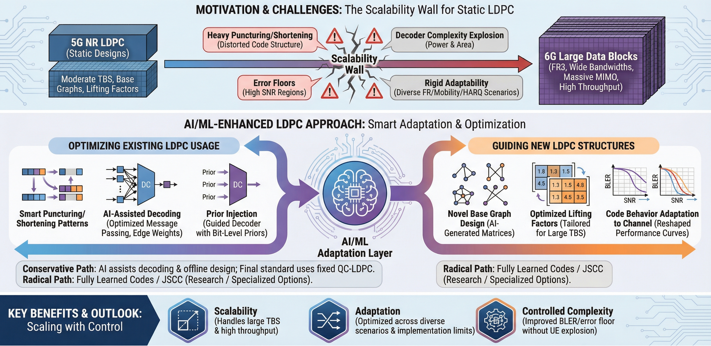

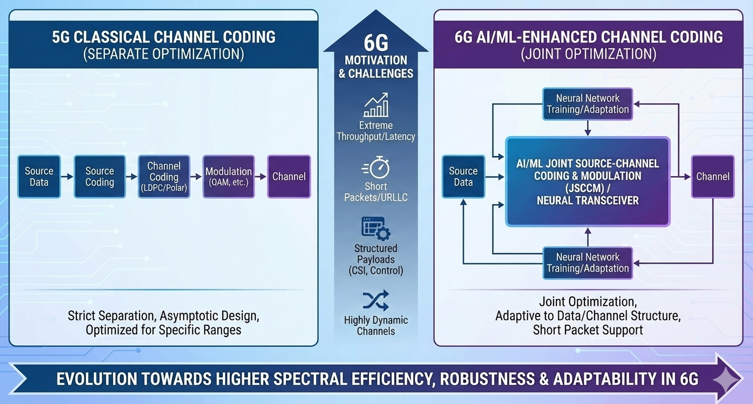

Channel Coding Enhancement

In 6G, channel coding has to support much larger data blocks and much higher peak throughput than NR, while keeping BLER in the same tight range and staying within realistic UE complexity. NR already pushed LDPC and polar codes close to their practical limits, but the basic assumptions were still "moderate" TBS, moderate numbers of layers and ports, and relatively static base graphs. When we move to 6G with very wide bandwidths, FR3 operation, massive MIMO and extremely high data rates, the existing LDPC base graphs and lifting factors start to look rigid. They require heavy puncturing/shortening to fit large TBS, they can show error-floor issues in some regimes, and the decoder complexity can explode if we just increase iterations or add ad-hoc scaling.

The RAN1 122bis AI/ML documents already recognize this pressure and explicitly list AI/ML-based channel coding as a 6G use case, with LDPC decoding optimization as the first concrete example. In parallel, RAN1 also shows how AI-predicted bit-level priors can significantly boost convolutional and polar decoders for DCI, giving large BLER gains in some scenarios. This suggests a broader direction: instead of designing entirely new code families from scratch, 6G can keep QC-LDPC as a baseline, but use AI/ML both to optimize long-block LDPC behavior (e.g., edge weights, node scheduling, puncturing patterns) and to guide the introduction of new base graphs and lifting values tailored to large TBS. Overall, "Channel Coding Enhancement" for large data in 6G is less about replacing LDPC and more about letting AI/ML optimize how LDPC is used and how new LDPC structures are designed. In a conservative path, AI primarily assists decoding and offline base-graph design, and the final standard still specifies fixed QC-LDPC matrices and lifting values. More radical directions such as fully learned codes or JSCC-style coding for large PDSCH blocks may remain as research topics or highly specialized options for particular links.

Motivation

The main motivation for AI/ML in channel coding for large data is that static LDPC designs do not scale gracefully when both codeword length and required throughput grow. In NR, the current base graphs and lifting values were tuned for a certain range of TBS, code rates, and layer numbers. They already work close to the limit in many scenarios. When 6G pushes to much larger TBS and higher peak rates, the usual approach is to extend the same design by heavier puncturing, shortening, or concatenation. This keeps the implementation simple, but the code structure becomes distorted. Minimum distance gets worse, harmful short cycles appear more often, and the error floor starts to show up exactly in the high-SNR region where 6G wants to operate. At the same time, decoders must run faster and support more parallelism. A straightforward approach is to increase the number of iterations or add more ad-hoc tweaks like scaling and damping, but this quickly inflates power and area on the UE side. The problem gets even harder when we consider that 6G will not use a single “typical” channel. It must cover FR1, upper-mid bands, and FR3, different mobility levels, different HARQ strategies, and different segmentation patterns. A single frozen LDPC design with fixed base graphs and lifting values cannot be optimal across all these axes. So we need a way to adapt code behavior to channel conditions, to large-block structures, and to implementation limits. AI/ML provides this missing adaptation layer. It can reshape how messages are passed in the decoder, how priors are injected, and how base graphs and liftings are chosen during design. The core motivation is therefore simple: if we keep static LDPC, we hit a scalability wall in BLER, error floor, and complexity for large data. If we combine LDPC with AI/ML, we get a realistic chance to keep error performance and complexity under control even as 6G pushes codeword length and throughput far beyond NR.

Methodology

Channel coding enhancement for large data in 6G can be viewed as having two main layers, each addressing a different limitation of the current NR LDPC design. The first layer focuses on improving how we use the existing LDPC structure during decoding, without modifying the underlying parity-check matrix. This is essential because QC-LDPC is extremely hardware-friendly, and any modification to the matrix structure immediately affects memory layout, routing, and parallelization in the decoder. AI/ML provides a way to inject intelligence into the decoding process while preserving full compatibility with existing hardware blocks. The second layer focuses on the offline design of new LDPC base graphs and lifting values specifically optimized for very large transport blocks expected in 6G. This part is more fundamental: instead of stretching NR LDPC beyond its comfort zone using puncturing or shortening, AI/ML can automatically explore the enormous combinatorial space of graph structures, degree distributions, and circulant shifts to find designs that maintain minimum distance, reduce harmful cycles, and stay hardware-friendly at scale. Together, these two layers allow 6G to evolve LDPC in a way that is both backward-compatible and capable of supporting much larger data blocks and much higher throughput than NR.
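The first layer (changing how messages are scaled while leaving the parity-check matrix untouched) can be sketched as a weighted min-sum decoder. This is an illustrative toy, not a method taken from the RAN1 documents: the matrix is a (7,4) Hamming parity-check matrix rather than an NR LDPC base graph, and the per-iteration weights are hypothetical stand-ins for values a trained model would supply.

```python
def weighted_min_sum(H, llr, weights):
    """Min-sum decoding with one scaling weight per iteration.

    H: parity-check matrix as a list of 0/1 rows (left unchanged).
    llr: channel LLRs, positive meaning bit 0 is more likely.
    weights: per-iteration scaling factors (hypothetical, stand-ins for learned values).
    """
    m, n = len(H), len(llr)
    checks = [[v for v in range(n) if H[c][v]] for c in range(m)]
    c2v = {(c, v): 0.0 for c in range(m) for v in checks[c]}
    hard = [0 if x >= 0 else 1 for x in llr]
    for w in weights:
        # Variable-to-check: total belief minus the message received on the same edge.
        total = list(llr)
        for (c, v), msg in c2v.items():
            total[v] += msg
        v2c = {(c, v): total[v] - c2v[(c, v)] for (c, v) in c2v}
        # Check-to-variable: sign product and minimum magnitude, scaled by the weight.
        for c in range(m):
            for v in checks[c]:
                others = [v2c[(c, u)] for u in checks[c] if u != v]
                sign = -1.0 if sum(x < 0 for x in others) % 2 else 1.0
                c2v[(c, v)] = w * sign * min(abs(x) for x in others)
        posterior = list(llr)
        for (c, v), msg in c2v.items():
            posterior[v] += msg
        hard = [0 if p >= 0 else 1 for p in posterior]
        # Stop early once every parity check is satisfied.
        if all(sum(hard[v] for v in checks[c]) % 2 == 0 for c in range(m)):
            break
    return hard

H = [
    [1, 1, 0, 1, 1, 0, 0],
    [1, 0, 1, 1, 0, 1, 0],
    [0, 1, 1, 1, 0, 0, 1],
]
codeword = [1, 1, 0, 0, 0, 1, 1]                 # satisfies all three checks of H
llr = [-2.0, -2.0, 2.0, 2.0, -0.5, -2.0, -2.0]   # bit 4 received with the wrong sign
decoded = weighted_min_sum(H, llr, weights=[0.8, 0.8, 0.9])
print(decoded == codeword)
```

Because only the scaling factors change, the Tanner graph, memory layout, and message schedule stay exactly as in a conventional min-sum decoder, which is the compatibility property the first layer relies on.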

Models

In the large-data regime of 6G, AI-assisted channel coding requires model architectures that can capture the complex statistical structure of extremely long LDPC codewords and the dynamic behavior of belief-propagation decoding under diverse channel conditions. Traditional LDPC decoding relies on local message passing with fixed update rules, but this becomes insufficient when transport block sizes grow, when the channel varies across frequency and time, or when puncturing and shortening create irregular reliability patterns inside the codeword. As a result, the models used in 6G channel-coding enhancement must extract information not only from individual LLRs but also from the broader relationships among bits, parity-checks, and graph topology. Recurrent models such as Bi-LSTM can analyze long sequences of soft information and predict bit-level reliability before decoding begins. Fully connected networks can learn per-iteration or per-edge scaling factors that refine BP message updates without changing the LDPC structure. Graph neural networks (GNNs) can operate directly on the Tanner graph, learning how messages should flow across thousands of nodes, which becomes essential as block sizes increase. Transformer models provide a way to capture long-range dependencies that classical BP cannot handle, while autoencoder-based designs open the door to hybrid coding structures that blend LDPC with learned components. Lightweight CNN or MLP variants act as practical, low-power modules for UE-side implementations, offering small but consistent gains with minimal overhead. Together, these model families form an adaptable toolbox that lets 6G maintain strong error-correction performance even as codeword sizes, throughput targets, and channel variability reach levels far beyond what NR LDPC was originally designed to support.

Challenges

Deploying AI/ML-based channel-coding enhancement for large data in 6G introduces several practical challenges because the decoding process must remain extremely reliable and tightly timed, even as neural components add complexity. Long LDPC codewords already stretch decoder memory, bandwidth, and iteration budgets, and inserting AI-based modules risks exceeding HARQ deadlines or UE power limits. Another difficulty is generalization: models trained on specific SNR ranges, lifting factors, or channel profiles may behave unpredictably when exposed to FR3 mobility, hardware impairments, or interference conditions not seen during training. Producing training data that covers all relevant configurations is expensive, and mismatch between simulated and real-world channels can cause unexpected BLER behavior. Integrating AI models into hardware-friendly QC-LDPC pipelines is also challenging because the decoder demands deterministic behavior, fixed latency, and strict quantization rules. Standardization adds further constraints, since AI-assisted decoding must be reproducible across vendors and cannot depend on model retraining. Finally, operators require explainable and stable behavior: sudden BLER spikes or non-deterministic decoding outcomes would not be acceptable in commercial networks. These factors make AI-enhanced coding promising, but they also require careful engineering to ensure robustness and practicality.

JSCC / JSCCM Enhancement

In 6G, Joint Source–Channel Coding (JSCC) and Joint Source–Channel Coding & Modulation (JSCCM) are explored as key AI-native techniques to rethink how information is represented over the physical layer. Instead of treating source coding, channel coding, and modulation as strictly separated blocks, JSCC/JSCCM learns an end-to-end mapping from the input information to the transmitted waveform and back, with the channel represented as a fixed layer in between. This paradigm is particularly attractive in 6G for highly structured payloads such as CSI feedback, control information, and short packets, where classical separate designs are inefficient or difficult to optimize. It also fits naturally with two-sided AI/ML use cases discussed in the RAN1 documents, where both transmitter and receiver can be redesigned jointly under a common learning framework. As a result, JSCC/JSCCM becomes a central use case for AI-enhanced PHY, enabling better robustness and compression of critical information under realistic 6G channel conditions.

Motivation

The motivation for JSCC/JSCCM in 6G comes from the limitations of the conventional “separation principle” when applied to short-blocklength, structured, or highly constrained feedback scenarios. Traditional designs first apply source compression, then channel coding, then modulation, assuming ideal interfaces between these layers and asymptotically long blocks. In 6G, many key payloads such as CSI, beam-related feedback, or DCI are short, highly structured, and extremely sensitive to delay and error. Under these conditions, separate design leads to suboptimal use of bits and radio resources, and the overhead of protecting and quantizing such information can become a bottleneck. JSCC/JSCCM addresses this by allowing the system to learn redundancy and symbol mapping jointly, directly over the physical channel model, and by tailoring the representation to the actual statistics of the payload and the channel. This provides a path to reduce overhead for CSI/feedback, improve robustness in harsh channels, and enable new types of PHY-level representations, including for ISAC or other 6G-advanced scenarios.

Methodology

The JSCC/JSCCM methodologies considered for 6G in the RAN1 discussions typically model the physical layer as an autoencoder, where the encoder corresponds to the transmitter-side mapping and the decoder to the receiver-side reconstruction, with the channel inserted as a non-trainable layer. For JSCC, the encoder operates on digital payload representations (e.g., CSI matrices or control bits) and outputs a sequence of channel symbols, while the decoder reconstructs the payload from noisy received symbols. For JSCCM, the mapping is extended down to the modulation level, allowing the constellation and symbol statistics themselves to be learned rather than fixed. Variants exist where JSCC/JSCCM is used only for specific sub-blocks (e.g., CSI feedback fields) while other parts of the system remain conventional. The training process can include multiple channel conditions, SNRs, and interference profiles so that the learned mapping is robust across a range of deployment scenarios. Some proposals also integrate JSCC/JSCCM with higher-layer coding (e.g., FEC used as an outer code) to combine the benefits of learned inner mappings with the robustness of standardized outer codes.
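The encoder, fixed channel layer, and decoder structure can be sketched without any training loop. This is a structural illustration only: the weight matrix `W` is a hand-picked placeholder with orthonormal columns rather than a learned mapping, the channel is assumed to be real-valued AWGN, and cosine similarity is assumed as the reconstruction metric because only the direction of a CSI-like vector is taken to matter here.

```python
import math
import random

# Hypothetical fixed weights: 4 channel uses for a 2-dimensional payload.
# Columns are orthonormal, so W^T W = I (a trained encoder would replace this).
A = 1 / math.sqrt(2)
W = [[A, 0.0], [0.0, A], [A, 0.0], [0.0, A]]

def encode(x):
    """Transmitter side: linear mapping followed by unit average power normalization."""
    s = [sum(W[i][j] * x[j] for j in range(len(x))) for i in range(len(W))]
    norm = math.sqrt(sum(v * v for v in s))   # assumes x is not the zero vector
    scale = math.sqrt(len(s)) / norm
    return [v * scale for v in s]

def awgn(symbols, noise_std, rng):
    """Non-trainable channel layer: additive white Gaussian noise."""
    return [v + rng.gauss(0.0, noise_std) for v in symbols]

def decode(y):
    """Receiver side: apply W^T, which recovers the payload up to a positive scale."""
    return [sum(W[i][j] * y[i] for i in range(len(W))) for j in range(len(W[0]))]

def cosine_similarity(a, b):
    dot = sum(p * q for p, q in zip(a, b))
    return dot / (math.sqrt(sum(p * p for p in a)) * math.sqrt(sum(q * q for q in b)))

payload = [0.6, -0.8]
tx = encode(payload)
tx_power = sum(v * v for v in tx) / len(tx)
clean = cosine_similarity(payload, decode(tx))
noisy = cosine_similarity(payload, decode(awgn(tx, 0.1, random.Random(0))))
print(tx_power, clean, noisy)
```

In an actual JSCC design both mappings would be neural networks trained end to end through the channel layer across several SNRs; the sketch only shows where each block sits and why the channel itself carries no trainable parameters.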

Models

JSCC/JSCCM use cases rely primarily on autoencoder-style neural architectures. Encoder and decoder blocks can be implemented using fully connected networks, CNNs, or Transformer-like modules, depending on the structure of the payload (e.g., matrix-shaped CSI or vectorized control fields). For CSI-oriented JSCC, CNNs and attention-based models are useful to capture spatial and frequency correlations, while Transformers are effective when the payload can be viewed as a sequence. Variational autoencoders (VAEs) or other latent-variable models may be used to enforce compact, well-structured latent spaces for better compression and robustness. In some proposals, JSCC is combined with JSCCM through multi-branch or multi-head architectures, where different latent dimensions map to different parts of the signal or spectrum. Lightweight decoders and quantization-aware training are considered when JSCC decoding must run on UE hardware under tight complexity constraints.

Challenges

Despite its promise, JSCC/JSCCM also introduces several challenges for 6G deployment. Reliability and predictability are major concerns, because JSCC/JSCCM changes the basic relationship between bits and waveforms, making it harder to analyze performance using classical coding theory. Interoperability is another key issue: both ends of the link must share the same learned mapping, so LCM procedures, versioning, and fallback behavior must be clearly defined. Complexity and latency can be higher than in conventional separated designs, especially if the encoder and decoder are large. Training robustness is also critical; models must perform well over a wide range of SNRs and channel conditions, including those not perfectly represented in the training set. Finally, aligning JSCC/JSCCM with standardization processes requires careful identification of which use cases justify two-sided learning and how these schemes coexist with legacy, bit-level interfaces and code structures.

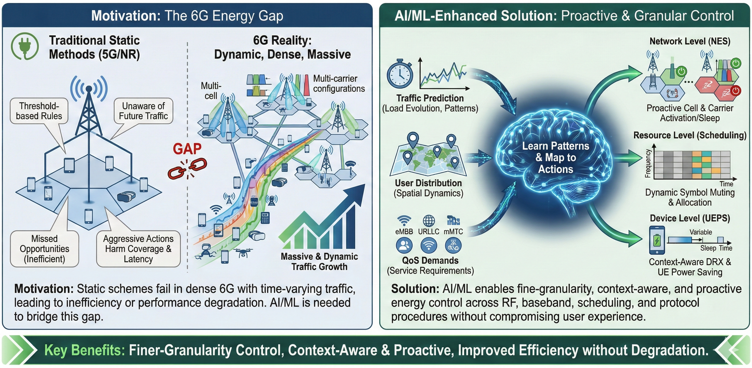

Energy Efficiency Enhancement

In 6G, energy efficiency becomes a primary design objective rather than a secondary optimization, because networks must support massive traffic growth, dense deployments, and new service types while keeping operational and environmental costs under control. Traditional mechanisms for reducing power consumption, such as static cell switch-off, fixed DRX configurations, and simple load-based sleep modes, are no longer sufficient when traffic is highly dynamic across time, frequency, and space. 6G must coordinate energy-saving actions across multiple layers, including RF, baseband, scheduling, and protocol procedures, without causing unacceptable degradation in coverage, latency, or user experience. AI/ML provides a way to learn traffic patterns, load evolution, and channel characteristics, enabling more precise and proactive control of when and where resources are activated or put into low-power states. As a result, AI-enhanced energy efficiency is positioned as a key use case in 6G, closely tied to features such as Network Energy Saving (NES) and UE Power Saving (UEPS).

Motivation

The motivation for applying AI/ML to energy efficiency in 6G arises from the growing gap between static energy-saving schemes and the complex, time-varying behavior of real networks. Existing techniques often rely on threshold-based rules that are not aware of future traffic, mobility patterns, or service requirements. This can lead either to missed energy-saving opportunities or to aggressive power-saving actions that harm coverage, throughput, and latency. In dense 6G deployments with many cells, beams, carriers, and frequency bands, the number of possible energy-saving configurations becomes very large, and exploring them manually or with simple heuristics is impractical. AI/ML can learn to predict traffic load, user distribution, and QoS demands, and can map these predictions to energy-control actions such as cell and carrier activation, symbol muting, and DRX parameter selection. This enables finer-granularity and more context-aware energy-saving behavior than traditional methods can provide.

Methodology

The methodologies for AI-based energy efficiency in 6G can be divided into several categories. The first is traffic- and load-prediction driven control, where models forecast future cell or beam load and then activate or deactivate resources accordingly, including carrier on/off, symbol muting, and sleep modes for RF chains and baseband units. The second is AI-based NES policy optimization, where the network learns policies for switching cells, sectors, or beams into low-power states while preserving coverage and handover performance. A third methodology is UE power-saving enhancement, in which DRX and other UE-side configurations are tuned based on predicted traffic patterns and application behavior to minimize wake-ups and unnecessary measurements. Another direction is joint energy- and performance-aware scheduling, where schedulers are trained to choose resource allocations that balance throughput, latency, and power, for example by concentrating activity in shorter time windows to increase sleep opportunities. Finally, cross-layer energy coordination leverages AI/ML to align PHY/MAC actions with higher-layer policies such as slicing or service prioritization.
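Prediction-driven carrier on/off control, the first category above, can be sketched as a load estimate feeding a hysteresis rule. The exponentially weighted moving average below is only a stand-in for a learned traffic predictor, and the thresholds, smoothing factor, and load trace are hypothetical values chosen for illustration.

```python
def carrier_decisions(load_trace, on_thr=0.6, off_thr=0.3, alpha=0.5):
    """Return, per time step, whether a secondary carrier is kept active.

    load_trace: normalized cell load samples in [0, 1].
    on_thr / off_thr: hysteresis thresholds (hypothetical values).
    alpha: EWMA smoothing factor standing in for a learned load predictor.
    """
    active = True
    estimate = load_trace[0]
    states = []
    for load in load_trace:
        estimate = alpha * load + (1 - alpha) * estimate
        if active and estimate < off_thr:
            active = False      # predicted load is low: put the carrier to sleep
        elif not active and estimate > on_thr:
            active = True       # predicted load is high again: reactivate
        states.append(active)
    return states

trace = [0.8, 0.5, 0.2, 0.1, 0.1, 0.4, 0.9, 0.9]
states = carrier_decisions(trace)
sleep_fraction = states.count(False) / len(states)
print(states, sleep_fraction)
```

The gap between the two thresholds prevents rapid toggling when the estimate hovers near a single threshold. It also shows the cost side of the trade-off: the carrier stays off for one step after load has already risen, which is the kind of delay a better predictor is meant to shorten.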

Models

For energy efficiency use cases, several AI/ML model types are especially relevant. Time-series models such as LSTM, GRU, and temporal convolutional networks are used for traffic and load prediction across cells, carriers, or beams. Reinforcement learning (RL) and deep RL algorithms are employed to learn NES and UEPS policies that balance energy savings against QoS degradation, often using reward functions that combine power, throughput, and latency. Graph neural networks can represent the spatial relationships between cells and beams in dense networks, enabling coordinated energy decisions across multiple nodes. Lightweight MLP or decision-tree-based models may be used for on-device DRX configuration or simple policy decisions where complexity must be minimized. In some cases, hybrid approaches combine prediction models with RL-based policy layers to separate forecasting from decision-making.

Challenges

AI/ML-based energy efficiency in 6G faces several important challenges. First, incorrect or overly aggressive energy-saving actions can create coverage holes, increase call drops, or violate latency and reliability targets, so policies must be conservative and well-validated. Second, training data for energy use cases must capture a wide range of traffic patterns, including rare peaks and special events, otherwise models may fail under unusual load. Third, the interaction between energy-control policies and other RAN functions such as mobility, scheduling, and interference management is complex, making it difficult to predict the full system impact of a given policy. Fourth, implementing RL or complex models for NES at scale raises questions about stability, convergence, and explainability, which operators require before trusting automated control. Finally, standardization must define how AI-controlled energy features expose their capabilities, report their states, and fall back to safe default behavior when models are unavailable or uncertain.

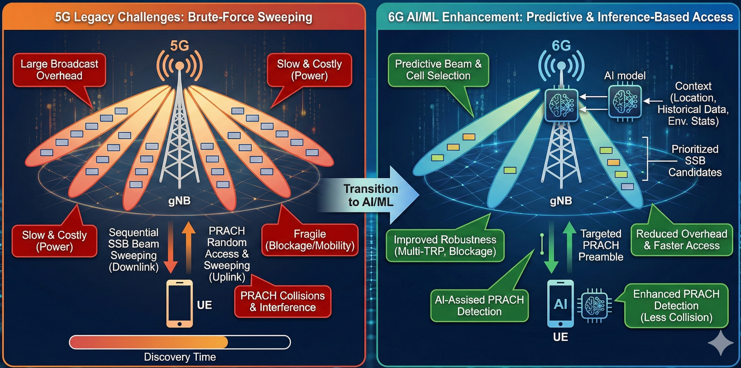

Initial Access Enhancement

In 6G, initial access must cope with much narrower beams, larger antenna arrays, and operation over wider frequency ranges, including FR3, than in 5G. The legacy NR approach relies heavily on SSB-based cell search and PRACH-based random access, with beam sweeping on the downlink and potentially on the uplink. As the number of beams, cells, and carriers increases, exhaustive or semi-exhaustive sweeping becomes too slow and too costly in terms of overhead and UE power. In addition, blockage, mobility, and multi-TRP deployments make the initial access process more fragile, since the best beam or TRP may change quickly or be temporarily unavailable. AI/ML enables 6G to move from brute-force sweeping to predictive and inference-based initial access, where the network and UE use prior information and learned models to narrow down beam and cell candidates, detect PRACH more robustly, and reduce access delay. As a result, initial access enhancement with AI/ML becomes a key use case for improving both user experience and network efficiency in 6G.

Motivation

The motivation for applying AI/ML to initial access arises from the scaling limitations of classical NR procedures when extended to 6G. SSB and CSI-RS sweeping over many narrow beams induces large broadcast overhead and long discovery times, especially when multiple carriers or TRPs must be searched. PRACH detection and preamble multiplicity estimation also become more challenging in dense deployments, where collisions and interference increase. At the same time, 6G aims for faster access, better coverage in challenging environments, and support for a wide variety of device types, including low-power and high-mobility UEs. Static initial access configurations and simple threshold-based detection are not enough to meet these requirements. AI/ML can incorporate context such as location, historical measurements, and environment statistics to prioritize beams and cells, predict which TRPs are likely to serve a UE, and improve PRACH detection under interference and multi-path. This allows initial access to become more targeted, faster, and more robust.

Methodology

AI-based initial access enhancement in 6G can be organized into several major methodologies. The first is beam and cell prediction for IA, where models use historical CSI, location, and mobility patterns to predict the most likely serving beams or cells for a UE, thereby narrowing the search space before SSB or CSI-RS sweeping. The second is AI-based PRACH reception and multiplicity detection, using neural receivers to detect weak preambles and distinguish multiple UEs transmitting the same preamble under interference and noise. A third methodology is context-aware IA configuration, where AI/ML maps UE or environment features (e.g., time-of-day, traffic patterns, mobility class) to IA parameters such as SSB periodicity, beam sweeping order, and PRACH resources. Another direction is cross-frequency IA assistance, where information from legacy bands or previous connections is used to inform beam or cell selection at higher frequencies, including FR3. Together, these approaches aim to accelerate IA, reduce mis-detections and false alarms, and lower the UE and network energy cost of entering the system.
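How prior information can shape the beam sweeping order is illustrated below with the simplest possible "model": a frequency count of which beam was best in earlier accesses from the same coarse location. The history, the 8-beam codebook, and the assumption that the sweep may stop as soon as the best beam is found are all hypothetical; a learned predictor would replace the counting step.

```python
from collections import Counter

def sweep_order(history, num_beams):
    """Order beams by how often each was best in past accesses (ties broken by index)."""
    counts = Counter(history)
    return sorted(range(num_beams), key=lambda b: (-counts[b], b))

def beams_tested(order, true_best):
    """Number of beams measured before the best beam is reached, assuming early stop."""
    return order.index(true_best) + 1

# Hypothetical log of best-beam indices observed at one coarse location.
history = [5, 5, 6, 5, 2, 6, 5]
NUM_BEAMS = 8

order = sweep_order(history, NUM_BEAMS)
prioritized = beams_tested(order, true_best=6)
sequential = beams_tested(list(range(NUM_BEAMS)), true_best=6)
print(order, prioritized, sequential)
```

Beams never seen in the history stay at the end of the order rather than being dropped, so the procedure degrades to a full sweep instead of failing when the prediction is wrong.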

Models

The models used for initial access enhancement in 6G reflect the sequential and spatial nature of the problem. Sequence models such as LSTM, GRU, and Transformers are useful for beam and cell prediction, since they can learn patterns in historical CSI, beam indices, or mobility traces. CNN-based models are well-suited for PRACH reception, where the time–frequency structure of the received signal can be treated as an image-like grid for detection and multiplicity estimation. Lightweight MLP models can be employed for context-to-configuration mapping, translating simple features into IA parameter settings. In some proposals, hybrid models combine location-based features (e.g., coarse positioning information) with CSI features to improve prediction accuracy. The choice of model depends on where the inference runs (gNB or UE) and on the available compute resources and latency constraints.

Challenges

AI/ML-based initial access also faces several challenges that must be addressed for 6G deployment. One key issue is generalization: models trained for IA in a given environment or deployment may not perform well when cell layouts, blockage patterns, or UE distributions change. Another challenge is timing: IA procedures operate under tight latency constraints, so beam prediction and PRACH detection must run quickly enough not to delay access. Data collection for training can be difficult, especially for rare IA failure modes or edge cases such as sudden blockage or extreme mobility. Mis-prediction of beams or cells can lead to longer access times, repeated RA attempts, or even access failures, so robust fallback strategies to classical NR IA are needed. Finally, integrating AI-based IA with existing RRC, NAS, and higher-layer procedures requires careful definition of capability signaling, measurement reporting, and model lifecycle management.

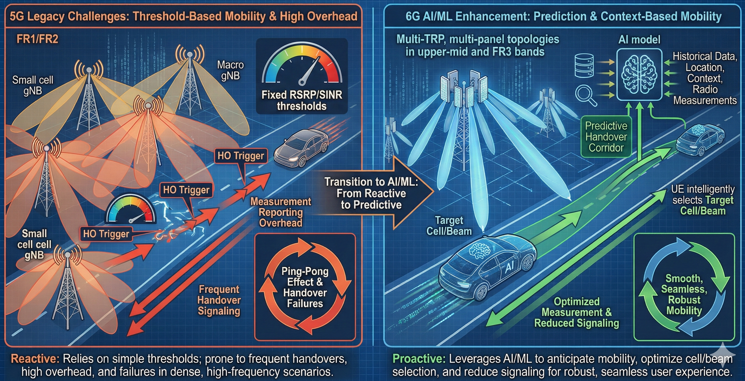

Mobility Enhancement

In 6G, mobility procedures must operate under much more complex conditions than in 5G: denser deployments, multi-TRP and multi-panel topologies, operation in upper-mid bands and FR3, and tighter requirements on both reliability and latency. Classical handover, measurement, and cell/beam selection mechanisms were designed around relatively simple thresholds and event triggers, assuming moderate cell densities and limited beam counts. As networks evolve toward extremely dense, multi-layer architectures with narrow beams and dynamic traffic patterns, these mechanisms can lead to excessive measurement overhead, ping-pong events, and handover failures. AI/ML enables 6G to move from purely threshold-based mobility to prediction- and context-based mobility, where the network and UE leverage learned models to anticipate mobility events, optimize measurement configurations, and select cells and beams more intelligently. As a result, mobility enhancement with AI/ML is considered a key use case, especially in the context of high-frequency and high-speed scenarios.

Motivation

The motivation for applying AI/ML to mobility comes from the limitations of conventional handover and reselection procedures when extended to dense, beam-centric 6G deployments. Legacy mechanisms rely on RSRP/RSRQ/SINR thresholds and hysteresis-based events (e.g., A3, A5) with static or semi-static measurement configurations. In dense deployments, this can result in frequent event triggering, large measurement reporting overhead, and suboptimal target-cell selection, particularly for UEs in high mobility or at cell edges. In upper-frequency bands, the environment changes rapidly due to blockage and fast fading, making it difficult to maintain stable coverage using simple rules. AI/ML can exploit historical mobility traces, radio measurements, and context (location, time-of-day, traffic) to predict future serving cells or beams, adjust measurement and reporting behavior, and anticipate handover needs. This allows 6G to reduce unnecessary measurements and signaling while improving handover robustness and user experience.

Methodology

AI-based mobility enhancement methods in 6G can be grouped into several categories. The first is handover prediction and target-cell/beam recommendation, where models use UE measurement histories, location estimates, and network context to predict when a handover is needed and which candidate cells or beams are most suitable. The second is adaptive measurement configuration, where AI/ML selects or tunes measurement objects, report types, filtering, and periodicities based on UE mobility state, service type, and environment, thereby reducing unnecessary measurements while maintaining HO reliability. A third methodology is AI-assisted cell and beam reselection, especially for idle mode, where the network or UE learns preferred cells for different locations and times to optimize camping and reselection behavior. Another direction is mobility robustness optimization, where models analyze HO outcomes (e.g., failures, ping-pongs, radio link failures) and automatically adjust parameters or policies to reduce such events. Finally, AI can be used for multi-TRP and multi-layer mobility coordination, selecting appropriate TRP/beam combinations for serving and secondary links in advanced dual-connectivity or multi-TRP scenarios.
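Handover prediction can be contrasted with the reactive baseline in a few lines. The sketch below applies a simplified A3-style entering condition (neighbour better than serving by an offset plus hysteresis, with cell-specific offsets and time-to-trigger omitted) first to current measurements and then to extrapolated ones. The least-squares line is a deliberately simple stand-in for a learned time-series predictor, and the RSRP traces and parameter values are hypothetical.

```python
def a3_entered(serving_dbm, neighbor_dbm, offset_db=3.0, hysteresis_db=1.0):
    """Simplified A3-style entering condition (cell-specific offsets omitted)."""
    return neighbor_dbm - hysteresis_db > serving_dbm + offset_db

def linear_extrapolate(samples, steps_ahead):
    """Fit a least-squares line to equally spaced samples and evaluate it
    steps_ahead reporting periods after the last sample."""
    n = len(samples)
    mean_t = (n - 1) / 2
    mean_y = sum(samples) / n
    num = sum((t - mean_t) * (y - mean_y) for t, y in enumerate(samples))
    den = sum((t - mean_t) ** 2 for t in range(n))
    slope = num / den
    return mean_y + slope * ((n - 1 + steps_ahead) - mean_t)

# Hypothetical RSRP reports (dBm): the UE is moving away from the serving cell.
serving = [-80.0, -82.0, -84.0, -86.0]
neighbor = [-90.0, -88.0, -86.0, -84.0]

reactive = a3_entered(serving[-1], neighbor[-1])
pred_serving = linear_extrapolate(serving, steps_ahead=2)
pred_neighbor = linear_extrapolate(neighbor, steps_ahead=2)
proactive = a3_entered(pred_serving, pred_neighbor)
print(reactive, pred_serving, pred_neighbor, proactive)
```

On the current reports the condition is not yet met, while the extrapolated values two periods ahead do satisfy it, so a prediction-based scheme could start handover preparation earlier. A wrong extrapolation would trigger an unnecessary handover, which is the mis-prediction risk that fallback to the classical event is meant to contain.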

Models

Mobility-related AI/ML models must handle temporal, spatial, and contextual information. Sequence models such as LSTM, GRU, and Transformers are natural fits for handover prediction and mobility state classification, since they can learn patterns in measurement reports, beam indices, and HO histories. Time-series forecasting models can predict future signal levels or cell quality to support proactive HO decisions. For location- and topology-aware mobility, graph neural networks can model cell adjacency, TRP relationships, and UE–cell associations. Lightweight MLPs or tree-based models are suitable for parameter selection and mobility robustness optimization where interpretability and low complexity are important. In some proposals, hybrid models combine location, CSI, and higher-layer KPIs as features to provide more accurate and robust mobility decisions.

Challenges

AI/ML-based mobility enhancement in 6G also faces several important challenges. One major issue is generalization: mobility patterns can differ substantially between cities, deployments, and frequency bands, so models trained in one environment may not transfer well to another without adaptation. Another challenge is the impact of mis-prediction; incorrect handover or reselection suggestions can lead to additional HOs, RLFs, or degraded throughput, especially for high-speed UEs. Data collection for training must respect privacy constraints while still capturing enough detail about UE trajectories and measurements. Real-time constraints also apply, since mobility-related inference often needs to run within tight timing budgets in the gNB or near-RT RIC. Finally, integration with existing mobility procedures requires clear definitions for capability signaling, fallback behavior to classical HO, and lifecycle management of mobility models.

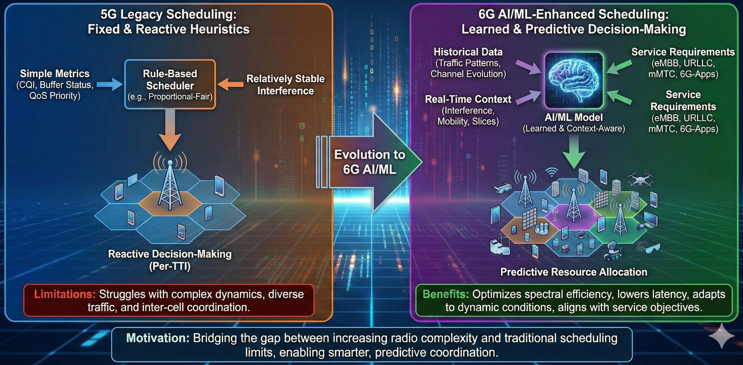

Scheduling / Resource Allocation Enhancement

In 6G, scheduling and resource allocation must orchestrate a far more complex radio environment than in 5G: extremely dense deployments, wideband carriers including FR3, massive MIMO with many layers, and diverse services ranging from eMBB to URLLC, mMTC, and new 6G-specific applications. Classical schedulers in NR are largely based on rule-based heuristics and relatively simple metrics such as CQI, buffer status, and QoS priority. These mechanisms can struggle to capture the full dynamics of interference, traffic bursts, mobility, and slice-specific policies in future 6G networks. AI/ML enables scheduling to move from fixed algorithms to learned decision-making that can adapt to traffic patterns, channel evolution, and service requirements, improving both spectral efficiency and user experience. As a result, scheduling and resource allocation enhancement is a central AI/ML use case in 6G.

Motivation

The motivation for AI/ML-based scheduling in 6G arises from the increasing gap between the complexity of the radio environment and the expressiveness of traditional scheduling rules. Legacy schedulers typically rely on proportional-fair or similar metrics computed per TTI, assuming relatively stable interference, homogeneous traffic profiles, and limited coordination across cells or TRPs. In dense 6G deployments, interference patterns fluctuate rapidly, traffic demands vary widely across users and slices, and coordination between cells, beams, and carriers becomes more important. Fixed heuristics may fail to capture these multi-dimensional trade-offs, leading to suboptimal throughput, latency, and fairness. AI/ML can learn from historical traffic and channel data to predict near-future conditions, identify beneficial scheduling patterns, and align resource allocation with service-level objectives. This allows schedulers to become predictive and context-aware rather than purely reactive.
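For reference, the per-TTI proportional-fair rule mentioned above can be sketched in a few lines. This is a textbook-style single-resource version, assuming one user is served per TTI and an exponentially weighted throughput average; the window length of 100 TTIs and the user names are illustrative assumptions.

```python
def pf_select(inst_rates, avg_rates, eps=1e-9):
    """Pick the user with the largest instantaneous-to-average rate ratio."""
    return max(inst_rates, key=lambda u: inst_rates[u] / (avg_rates[u] + eps))


def update_averages(avg_rates, served_user, served_rate, window=100.0):
    """Update the exponentially weighted averages; unserved users receive rate 0."""
    alpha = 1.0 / window
    return {
        u: (1 - alpha) * r + alpha * (served_rate if u == served_user else 0.0)
        for u, r in avg_rates.items()
    }


# One TTI with two hypothetical users (rates in Mbit/s).
inst = {"ue1": 10.0, "ue2": 4.0}
avg = {"ue1": 8.0, "ue2": 2.0}
chosen = pf_select(inst, avg)   # ratios: ue1 = 1.25, ue2 = 2.0
avg = update_averages(avg, chosen, inst[chosen])
```

The metric looks only at the current TTI and a running average, which is the reactive behavior that learned schedulers aim to improve on by predicting traffic and channel evolution.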

Methodology

AI-based scheduling and resource allocation methods in 6G can be grouped into several categories. The first is reinforcement-learning-based (RL) scheduling, where the scheduler is modeled as an agent that selects RB, time, beam, or MCS allocations to maximize a long-term reward combining throughput, latency, and fairness. The second is supervised or imitation-learning-based scheduling, where AI models learn to mimic or improve upon expert-designed policies using historical data. A third methodology is traffic- and QoS-prediction-driven scheduling, where models forecast buffer evolution, packet arrivals, or latency deadlines and feed these predictions into otherwise conventional schedulers as enhanced inputs. Another direction is multi-cell and multi-TRP coordination, where AI/ML helps coordinate scheduling decisions across neighboring cells or TRPs to reduce interference and support joint transmission. Finally, cross-layer scheduling uses AI/ML to align MAC-level resource allocation with higher-layer objectives, such as slice SLAs, application KPIs, or energy-saving targets.
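One possible shape for the RL reward described above is a weighted sum of a normalized throughput term, a delay-violation penalty, and a fairness term. The sketch below uses Jain's fairness index for the last of these; the weights, the normalization by a peak rate, and the function names are assumptions for illustration, and a real design would tune them per service.

```python
def jain_index(rates):
    """Jain's fairness index: 1.0 for equal rates, 1/n when one user gets everything."""
    total = sum(rates)
    if total == 0:
        return 1.0
    return total ** 2 / (len(rates) * sum(r * r for r in rates))


def scheduling_reward(rates_mbps, delays_ms, delay_budget_ms, peak_rate_mbps,
                      w_tput=1.0, w_delay=1.0, w_fair=0.5):
    """Per-step reward: throughput gain minus delay violations plus fairness."""
    tput_term = sum(rates_mbps) / peak_rate_mbps
    violations = sum(1 for d in delays_ms if d > delay_budget_ms)
    delay_term = violations / len(delays_ms)
    return w_tput * tput_term - w_delay * delay_term + w_fair * jain_index(rates_mbps)


# Two hypothetical users: equal rates, one of them over the 10 ms delay budget.
reward = scheduling_reward(
    rates_mbps=[40.0, 40.0],
    delays_ms=[2.0, 12.0],
    delay_budget_ms=10.0,
    peak_rate_mbps=100.0,
)
```

Keeping each term in a comparable range (here roughly 0 to 1) makes the weights easier to interpret and reduces the chance that one objective dominates training.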

Models

Scheduling and resource allocation tasks use a variety of AI/ML models depending on the problem formulation. For RL schedulers, deep Q-networks (DQN), policy-gradient methods, and actor–critic architectures such as DDPG, PPO, or A3C are commonly considered. For supervised and imitation learning, MLPs and gradient-boosted trees are often used due to their balance between accuracy and interpretability. Time-series models such as LSTM, GRU, and temporal convolutional networks (TCN) are applied for traffic and QoS prediction. Graph neural networks (GNNs) can represent multi-cell topologies and interference relationships, enabling coordinated scheduling across cells and TRPs. Lightweight models may be deployed in near-RT RIC or gNB for fast inference, while more complex models can run in non-RT RIC to generate policies or configuration parameters offline.

Challenges

AI/ML-based scheduling in 6G introduces several challenges. First, stability and fairness are critical: small changes in scheduling policy can significantly impact user experience, so learned policies must be carefully validated before deployment. Second, obtaining representative training data for RL or supervised learning is difficult, especially for rare events or extreme load conditions. Third, real-time constraints at the gNB or near-RT RIC limit the complexity and inference time of deployed models. Fourth, explainability is important for operators, who must understand why certain scheduling decisions are made, particularly when SLAs or regulatory constraints are involved. Finally, integrating AI-based schedulers with existing NR/6G MAC procedures and standardization frameworks requires clear definitions for capability signaling, policy updates, and fallback to classical schedulers when needed.

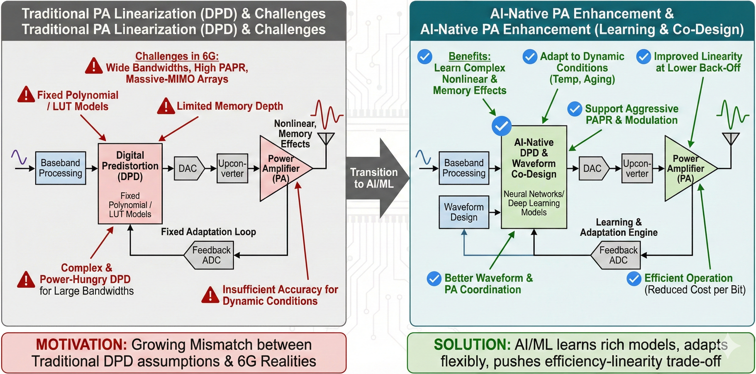

Power Amplifier (PA) Enhancement

In 6G, power amplifier (PA) enhancement becomes critical because radio units must handle much wider bandwidths, higher peak-to-average power ratios (PAPR), higher-order modulation, and large antenna arrays while still meeting tight spectral-emission and efficiency requirements. Traditional PA design and digital predistortion (DPD) techniques were developed mainly for single-carrier or limited-bandwidth OFDM scenarios, with relatively simple behavioral models and fixed adaptation loops. As 6G moves into upper-mid bands and FR3, uses larger aggregated bandwidths, and deploys many power amplifiers in massive-MIMO arrays, classical DPD solutions can become too complex, too power-hungry, or insufficiently accurate. At the same time, operators are under pressure to improve energy efficiency and reduce the cost per bit, which requires operating PAs closer to saturation without violating linearity and spectral-mask requirements. AI/ML provides new ways to learn PA characteristics, compensate nonlinearities, and co-design waveform and PA behavior, enabling 6G systems to push the efficiency–linearity trade-off further than conventional methods.
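Since PAPR drives how much back-off a PA needs, a short sketch of how it is computed may help. The example below builds one OFDM symbol from 64 random QPSK subcarriers using a plain inverse DFT and compares its PAPR with that of a constant-envelope tone. The subcarrier count and the fixed random seed are illustrative assumptions, and sampling at the Nyquist rate without oversampling tends to underestimate the PAPR of the continuous-time waveform.

```python
import cmath
import math
import random


def idft(freq_symbols):
    """Naive inverse DFT, adequate for a small number of subcarriers."""
    n = len(freq_symbols)
    return [
        sum(sym * cmath.exp(2j * math.pi * k * t / n)
            for k, sym in enumerate(freq_symbols)) / n
        for t in range(n)
    ]


def papr_db(samples):
    """Peak-to-average power ratio of a complex baseband sequence, in dB."""
    powers = [abs(s) ** 2 for s in samples]
    return 10 * math.log10(max(powers) / (sum(powers) / len(powers)))


rng = random.Random(0)  # fixed seed so the example is repeatable
qpsk = [complex(rng.choice((-1, 1)), rng.choice((-1, 1))) / math.sqrt(2)
        for _ in range(64)]

ofdm_papr = papr_db(idft(qpsk))
single_tone_papr = papr_db([cmath.exp(2j * math.pi * 3 * t / 64) for t in range(64)])
```

The single tone has a constant envelope and therefore 0 dB PAPR, while the multicarrier symbol sits somewhere between 0 dB and the theoretical bound of 10·log10(64) ≈ 18 dB for 64 equal-power subcarriers.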

Motivation

The motivation for applying AI/ML to PA enhancement in 6G arises from the growing mismatch between traditional DPD assumptions and the realities of wideband, multi-antenna transmitters. Classical PA linearization methods often rely on polynomial or lookup-table models with limited memory depth, tuned for relatively narrowband operation and slowly varying conditions. In 6G, PAs must cope with very wide instantaneous bandwidths, carrier aggregation, dynamic spectrum use, and a larger range of operating points as traffic and beam patterns evolve. This increases both the dimensionality and variability of the nonlinearity to be compensated. Moreover, massive-MIMO and distributed RU deployments multiply the number of PAs, making per-PA calibration and adaptation difficult with purely hand-crafted models. AI/ML can learn rich nonlinear input–output relationships with memory effects, capture temperature and aging behavior, and adapt more flexibly to changing conditions. This enables improved linearity at lower back-off, support for more aggressive PAPR and modulation schemes, and better coordination between waveform design and PA operation.
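As a point of comparison for learned models, the classical polynomial-with-memory approach can be sketched as a memory-polynomial fit by least squares. The example assumes NumPy is available and uses a toy PA (third-order compression plus a one-sample memory tap) invented purely for illustration; the odd orders (1, 3, 5) and the memory depth of 2 are likewise assumptions, not recommended settings.

```python
import numpy as np


def mp_basis(x, orders=(1, 3, 5), memory=2):
    """Build columns x[n-m] * |x[n-m]|**(k-1) for each delay m and order k."""
    cols = []
    for m in range(memory + 1):
        delayed = np.concatenate([np.zeros(m, dtype=complex), x[:len(x) - m]])
        for k in orders:
            cols.append(delayed * np.abs(delayed) ** (k - 1))
    return np.stack(cols, axis=1)


def fit_memory_polynomial(x, y, orders=(1, 3, 5), memory=2):
    """Least-squares estimate of the memory-polynomial coefficients."""
    coeffs, *_ = np.linalg.lstsq(mp_basis(x, orders, memory), y, rcond=None)
    return coeffs


def nmse_db(y, y_hat):
    """Normalized mean squared error between measured and modeled output, in dB."""
    return 10 * np.log10(np.sum(np.abs(y - y_hat) ** 2) / np.sum(np.abs(y) ** 2))


rng = np.random.default_rng(0)
x = 0.3 * (rng.standard_normal(2000) + 1j * rng.standard_normal(2000))

# Toy PA (assumption): third-order compression plus a one-sample memory tap.
x_prev = np.concatenate([np.zeros(1, dtype=complex), x[:-1]])
y = x - 0.2 * x * np.abs(x) ** 2 + 0.05 * x_prev

coeffs = fit_memory_polynomial(x, y)
error_db = nmse_db(y, mp_basis(x) @ coeffs)
```

Because the toy PA lies inside the model family, the fit is essentially exact here. A real PA measured over temperature, bandwidth, and operating point generally does not, which is the gap that neural behavioral models are intended to close.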

Methodology

PA enhancement with AI/ML in 6G can be organized into several main methodologies. The first is AI-based PA behavioral modeling, where neural networks learn the nonlinear, memory-dependent mapping from baseband input to RF output across different operating conditions. These models can then be used either directly in DPD design or as references for online adaptation. The second methodology is AI-based digital predistortion, in which the predistorter itself is implemented as a neural network that is trained to invert the PA response and minimize distortion at the antenna port. A third methodology is PAPR- and waveform-aware optimization, where AI/ML helps design or select waveforms and shaping parameters that are more PA-friendly while meeting spectral and performance constraints. A fourth direction is PA health and aging monitoring, using models to detect drift, degradation, or anomalies in PA behavior and trigger recalibration or maintenance. Finally, AI can support coordination across multiple PAs in massive-MIMO arrays, learning joint relationships that affect overall beam quality and out-of-band emissions.

Models

Various neural architectures can be applied to PA enhancement, each suited to different aspects of the problem. Feedforward MLPs and deep fully connected networks are commonly used for static or mildly dynamic nonlinear mappings. For PAs with significant memory effects, temporal models such as tapped-delay MLPs, temporal CNNs, or recurrent networks (LSTM, GRU) can better capture the dependence on past samples. Volterra-inspired neural structures or basis-function networks can approximate classic PA models while adding learning flexibility. CNNs can also be used when modeling wideband signals in the time–frequency domain. For multi-PA and array-level coordination, graph neural networks may be employed to represent the coupling between PAs and the spatial structure of the array. In all cases, model complexity must be chosen carefully to fit within the real-time constraints of baseband processing.

Challenges

AI/ML-based PA enhancement in 6G also faces important challenges. One central issue is the need for very high reliability: PA linearization errors directly affect spectral compliance and can cause interference beyond the licensed band, so any learned model must be robust and thoroughly validated. Another challenge is the scarcity and cost of high-quality training data, since collecting accurate PA input–output pairs across operating conditions, temperatures, and aging states can be time-consuming and hardware-intensive. Complexity and latency constraints in the RU and baseband limit the size and depth of deployable models, particularly for per-sample DPD processing. Generalization across manufacturing variations, frequency bands, and deployment environments is also non-trivial: models trained on one PA or batch may not work optimally on another without adaptation. Finally, integrating AI-based PA control into standardization and RAN4 testing requires clear procedures for model validation, performance guarantees, and fallback to conventional DPD schemes when needed.

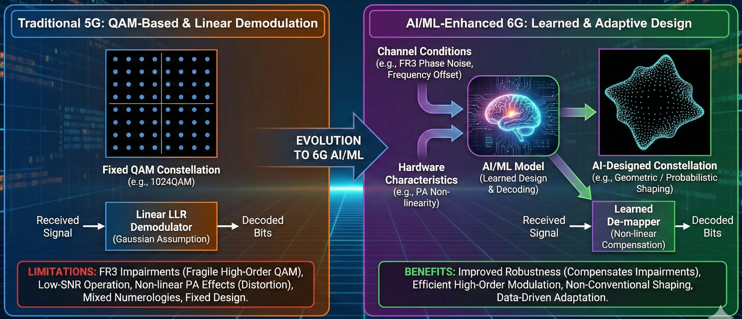

Modulation / Demodulation Enhancement

In 6G, modulation and demodulation design must support higher spectral efficiency, wider bandwidths, and more challenging channel conditions than 5G. Traditional QAM-based constellations and linear demodulators struggle under FR3 impairments, low-SNR operation, non-linear PA effects, and mixed numerologies. AI/ML provides new ways to design, adapt, and decode modulation schemes by learning directly from channel conditions and hardware characteristics. This enables improved robustness, efficient high-order modulation, and non-conventional constellation shaping suitable for future 6G deployments.

Motivation

The main motivation for AI/ML-based modulation and demodulation enhancement in 6G comes from the growing mismatch between classical QAM designs and real-world 6G operating environments. High-order QAM constellations (e.g., 1024QAM, 4096QAM) become extremely fragile under FR3 phase noise, frequency offset, and PA non-linearity. Traditional LLR demodulators assume linear Gaussian channels and cannot exploit the true statistics of hardware impairments or non-linear distortion. Furthermore, probabilistic and geometric shaping require optimized constellation distributions that are difficult to design analytically. AI/ML provides a data-driven pathway to design constellations, learn demappers, and compensate complex impairments that classical analytical methods cannot handle effectively.
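To show what a traditional LLR demodulator computes under the linear Gaussian assumption, the sketch below evaluates exact per-bit LLRs for an arbitrary constellation in complex AWGN with equiprobable symbols. The Gray-labelled QPSK constellation, the received sample, and the noise variance are illustrative assumptions; `noise_var` denotes the total complex noise variance.

```python
import math


def _logsumexp(values):
    """Numerically stable log(sum(exp(v))) for a list of floats."""
    peak = max(values)
    return peak + math.log(sum(math.exp(v - peak) for v in values))


def exact_llrs(y, constellation, labels, noise_var):
    """Per-bit LLR log(P(b=0 | y) / P(b=1 | y)) under complex AWGN."""
    metrics = [-abs(y - s) ** 2 / noise_var for s in constellation]
    llrs = []
    for i in range(len(labels[0])):
        m0 = [m for m, lab in zip(metrics, labels) if lab[i] == 0]
        m1 = [m for m, lab in zip(metrics, labels) if lab[i] == 1]
        llrs.append(_logsumexp(m0) - _logsumexp(m1))
    return llrs


# Gray-labelled QPSK: bit 0 follows the sign of I, bit 1 the sign of Q.
a = 1 / math.sqrt(2)
qpsk = [complex(a, a), complex(-a, a), complex(a, -a), complex(-a, -a)]
bits = [(0, 0), (1, 0), (0, 1), (1, 1)]

llrs = exact_llrs(complex(0.6, -0.5), qpsk, bits, noise_var=0.5)
```

The Gaussian likelihood is hard-coded in `metrics`. A learned demapper replaces exactly this step with a model trained on received samples, so that phase noise or PA distortion that breaks the Gaussian assumption can be reflected in the soft outputs.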

Methodology

AI/ML-based modulation and demodulation enhancements can be categorized into several methodologies. The first is learned geometric shaping, where neural models optimize constellation point positions for robustness and capacity. The second is probabilistic shaping with AI-based distribution learning, improving shaping gain by matching symbol distributions to channel statistics. Another methodology is learned demapping, where neural demodulators replace or augment LLR computation using channel-aware and hardware-aware models. A fourth methodology is joint optimization of modulation with PA/DPD models, enabling constellations tailored to transmitter non-linearity. Finally, end-to-end learning frameworks treat modulation/demodulation as part of an autoencoder system, enabling jointly optimized symbol designs.
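Probabilistic shaping can be illustrated with the Maxwell-Boltzmann family, a common classical choice in which symbol probabilities decay with symbol energy. The sketch below applies it to an unnormalized 16QAM grid and reports the entropy and average energy; the shaping parameter `nu = 0.1` is an arbitrary illustrative value, and a learned approach would instead tune this parameter, or the whole distribution, to the channel.

```python
import math


def maxwell_boltzmann(constellation, nu):
    """Symbol probabilities proportional to exp(-nu * |s|^2)."""
    weights = [math.exp(-nu * abs(s) ** 2) for s in constellation]
    total = sum(weights)
    return [w / total for w in weights]


def entropy_bits(probs):
    """Shannon entropy of the symbol distribution, in bits per symbol."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def average_energy(constellation, probs):
    """Mean symbol energy under the given distribution."""
    return sum(p * abs(s) ** 2 for s, p in zip(constellation, probs))


qam16 = [complex(i, q) for i in (-3, -1, 1, 3) for q in (-3, -1, 1, 3)]

uniform = maxwell_boltzmann(qam16, 0.0)   # nu = 0 gives the uniform distribution
shaped = maxwell_boltzmann(qam16, 0.1)    # favors low-energy inner points

uniform_stats = (entropy_bits(uniform), average_energy(qam16, uniform))
shaped_stats = (entropy_bits(shaped), average_energy(qam16, shaped))
```

Shaping trades entropy (bits carried per symbol) for lower average energy, so the useful operating point depends on the SNR and on how the saved energy is spent, for example by scaling the constellation back up.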

Models

Several model classes are used in AI-based modulation and demodulation. MLP-based models are commonly used for geometric shaping and simple neural demappers. CNNs and attention-based architectures help process time–frequency features for demodulation under distortion. Variational autoencoders (VAEs) and latent-space models help design structured constellations. End-to-end autoencoders combine encoders (modulation) and decoders (demodulation) with a channel layer. Lightweight models are needed for UE-side demodulation under tight complexity constraints.

Challenges

AI-based modulation and demodulation introduce several challenges. First, constellation design and neural demodulation must remain interpretable and meet strict RAN4 performance requirements for EVM, ACLR, and BER. Second, learned constellations must generalize across SNRs, channels, and hardware variations. Third, complexity constraints at the UE side limit the size of deployable demodulators. Fourth, integrating learned constellations into standardized bit-mapping and rate-matching procedures requires careful design. Finally, two-sided learning may require LCM procedures for updating transmitter/receiver models.

Reference
